The linux-kernel-enriched-corpus repo has been quietly collecting syzkaller reproducers for years, one program per file, refreshed daily. I've used them to improve syzkaller's coverage numbers in published benchmarks, and to find a handful of CVEs. I finally got time to look deeply into it.

So a few weekends ago I vibe-coded a small tool called kmap that parses every program in the repo into SQLite, scrapes the syzbot bug page for each, and lets me poke at the result. This post is a tour of what's in there.

the shape of the pile

Before anything else, the basics:

- 68,424 reproducers

- 6,251 distinct syzbot bugs behind them

- 314,629 individual syscall invocations

- 360 distinct syscall names, but 3,369 distinct syscall variants once you include the

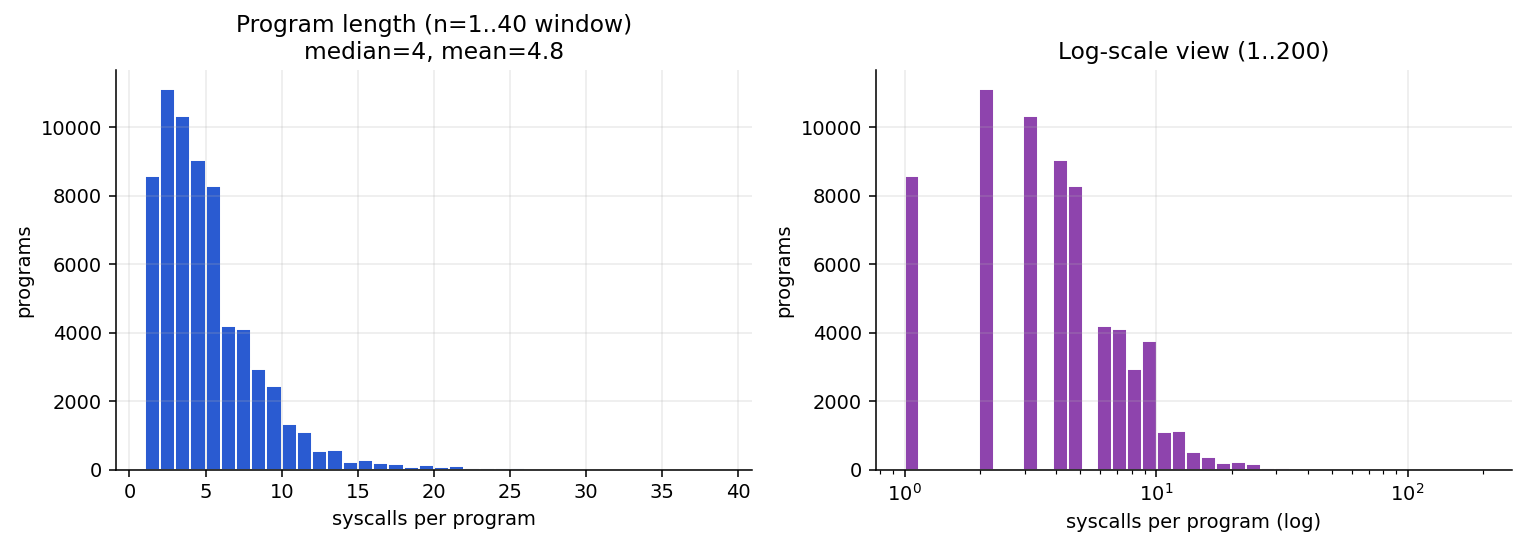

socket$nl_netfilter-style subtyping - Median program length: four syscalls

That last number is the one that surprised me. If you've only seen syzkaller reproducers in a crash report, you probably picture them as big, intimidating sequences. Syzbot's minimizer is very good. By the time a bug has been fixed and the repro has been identified, it usually takes three to five syscalls to trigger. Half the programs in the corpus have four calls or fewer.

Only 468 programs have more than 20 syscalls, and only 50 have more than 50. The longest program in the corpus is 763 syscalls and covers 318 distinct variants. It's essentially a whole fuzz session that resisted minimization, hanging off a KMSAN bug in ip_tunnel_lookup. Bugs that need a lot of state to trigger don't get simpler.
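To make the name/variant split concrete, here is a minimal sketch of how a syz program could be broken into calls. It is not kmap's actual parser: the regex, `parse_calls`, and the sample program are assumptions for illustration, based on the usual `r0 = name$variant(args)` line shape.

```python
import re
from statistics import median

# Optional "r0 = " result assignment, then the syscall name, then an optional $variant suffix.
CALL_RE = re.compile(r"^(?:r\d+\s*=\s*)?([A-Za-z_]\w*)(?:\$(\w+))?\(")

def parse_calls(program_text):
    """Return a list of (name, variant) tuples, one per syscall line."""
    calls = []
    for line in program_text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comment/header lines
        m = CALL_RE.match(line)
        if m:
            name, suffix = m.group(1), m.group(2)
            calls.append((name, f"{name}${suffix}" if suffix else name))
    return calls

# Made-up program, only for illustration.
SAMPLE = """\
# hypothetical reproducer
r0 = socket$nl_netfilter(0x10, 0x3, 0xc)
sendmsg(r0, 0x0, 0x0)
r1 = openat(0xffffffffffffff9c, 0x0, 0x0, 0x0)
ioctl(r1, 0x0, 0x0)
"""

calls = parse_calls(SAMPLE)
distinct_names = {name for name, _ in calls}
distinct_variants = {variant for _, variant in calls}
# Median program length over a toy list of per-program call counts.
median_length = median([len(calls), 2, 7])
```

Counting `distinct_names` and `distinct_variants` across every file is what separates the 360 from the 3,369 above.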

where the bugs live

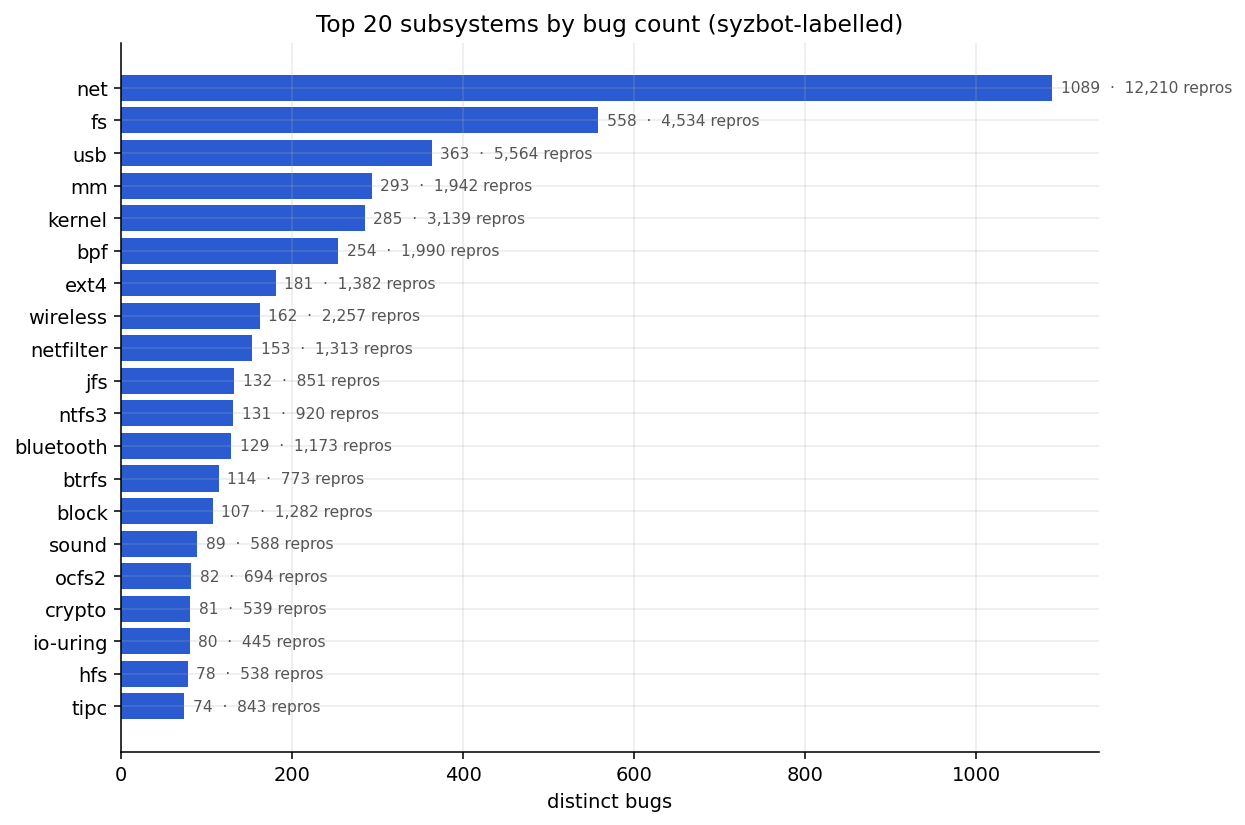

Networking dominates the subsystems. 1,089 distinct net bugs across 12,210 reproducers. After net comes a pack of usual suspects: filesystems (fs, ext4, jfs, ntfs3, btrfs, and a long tail of legacy FS), USB, memory management, BPF, the Bluetooth and wireless stacks.

Two things worth calling out here.

The first is the ratio of reproducers to bugs. USB has 363 bugs behind 5,564 reproducers, which is about 15 programs per bug. That's because the USB harness generates near-duplicate syz_usb_connect + syz_usb_control_io sequences for almost every root cause, and syzbot doesn't dedupe at the program level. In contrast, fs has 558 bugs across only 4,534 reproducers, about 8 per bug. Filesystem bugs need specific byte patterns in a mount image, not long call sequences, so the same root cause doesn't spawn as many variants.
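The ratios are plain division over the counts quoted in this section. A quick sketch, with the dictionary layout being my own choice:

```python
# (distinct bugs, reproducers) per subsystem, as quoted in this post.
counts = {
    "net": (1089, 12210),
    "usb": (363, 5564),
    "fs": (558, 4534),
}

# Reproducers per bug, rounded to one decimal place.
ratios = {name: round(repros / bugs, 1) for name, (bugs, repros) in counts.items()}

for name, ratio in sorted(ratios.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {ratio} reproducers per bug")
```

Net lands in between USB and fs, at roughly 11 reproducers per bug.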

The second is that some filesystems are overrepresented relative to their real-world use. JFS and NTFS3 together account for 263 bugs, more than ext4's 181. Neither is widely used, and I think they have so many bugs for three reasons: weak input validation, small maintenance footprint, and a syz harness (syz_mount_image$<fs>) that feeds them crafted block-device images as aggressively as it feeds ext4.

crash classes and a heatmap

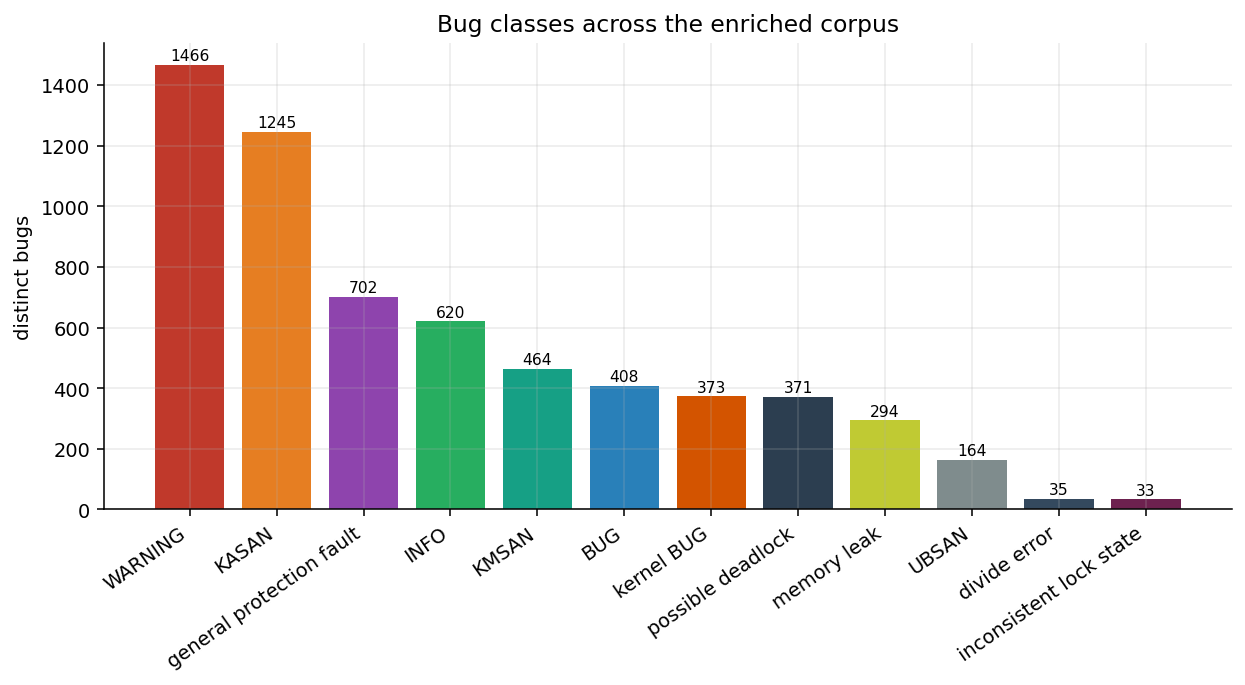

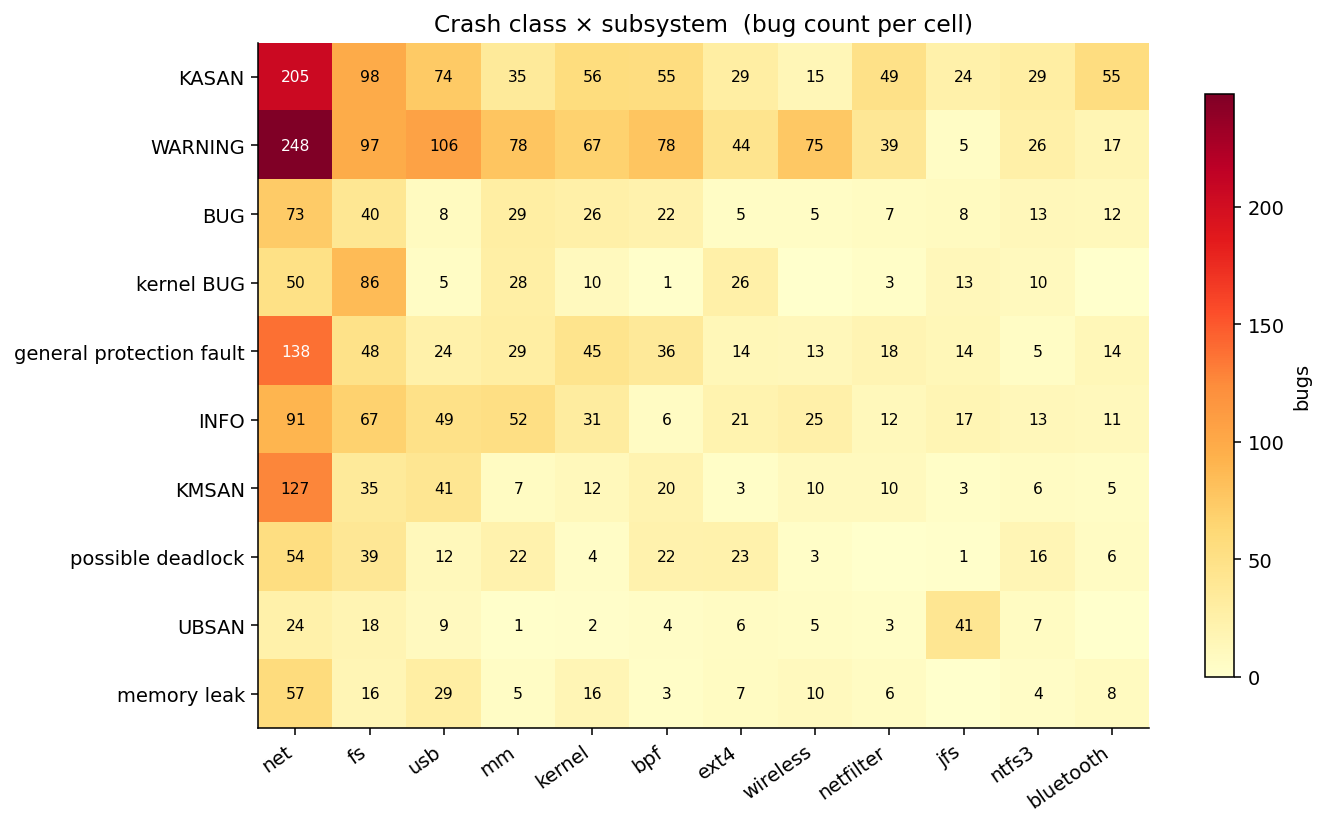

WARNING and KASAN together make up 43% of the bugs. These are the two classes syzbot's oracles are tuned hardest for, so they're going to dominate anything like this. Below them comes a long second tier: general-protection-fault (702 bugs, effectively "someone dereferenced a trashed or NULL pointer somewhere KASAN couldn't see it"), INFO (stall/hang warnings), KMSAN (464), BUG, possible-deadlock, memory-leak, UBSAN.

The more interesting chart is the crash-class-by-subsystem heatmap:

A few cells jump out if you know what you're looking at.

UBSAN lands in JFS way out of proportion to anything else. Forty-one UBSAN bugs in JFS alone, more than every other subsystem in this top-12 view combined. Almost all of them are shift-out-of-bounds, where JFS reads a bit-width field from the on-disk superblock and shifts a value by it without validating.

KMSAN is a networking demon (or maybe it's just optimized for it). 127 KMSAN bugs in net, against at most 41 anywhere else. Netlink parsers and skb-touching code leak uninitialized bytes more than any other code in the kernel. This is partly because the netlink attribute format makes "did I actually read this field?" hard to get right, and partly because the sheer surface area of the net stack dwarfs everything else. Networking go brr.

NTFS3 and JFS both index high on "kernel BUG" and KASAN but low on WARNING. These are filesystems where the driver doesn't defensively WARN_ON bad state; it just crashes. Ext4 is almost the opposite; lots of WARNINGs, fewer BUGs. I interpret that as "ext4 has been hardened enough that bugs fail loudly but not fatally."

USB is heavy on WARNING and memory-leak, light on BUG. The pattern there is about hot-unplug paths, i.e. things that leak or warn when a device disappears mid-enumeration, which is what syz_usb_connect is designed to simulate.

Bug hunters already know where the good opportunities lie :)
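The heatmap is a two-key group-by. Here is a minimal sketch against SQLite, assuming a hypothetical `bugs(subsystem, crash_class)` table (kmap's real schema may differ) and a handful of made-up rows:

```python
import sqlite3
from collections import defaultdict

conn = sqlite3.connect(":memory:")
# Hypothetical schema, not necessarily what kmap uses.
conn.execute("CREATE TABLE bugs (id INTEGER PRIMARY KEY, subsystem TEXT, crash_class TEXT)")

# Toy rows for illustration only.
rows = [
    ("jfs", "UBSAN"), ("jfs", "UBSAN"), ("jfs", "KASAN"),
    ("net", "KMSAN"), ("net", "KMSAN"), ("net", "WARNING"),
    ("ext4", "WARNING"),
]
conn.executemany("INSERT INTO bugs (subsystem, crash_class) VALUES (?, ?)", rows)

# One cell per (subsystem, crash class) pair; missing pairs simply have no entry.
heatmap = defaultdict(dict)
query = "SELECT subsystem, crash_class, COUNT(*) FROM bugs GROUP BY subsystem, crash_class"
for subsystem, crash_class, n in conn.execute(query):
    heatmap[subsystem][crash_class] = n
```

Each `heatmap[subsystem][crash_class]` value is one cell of the chart.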

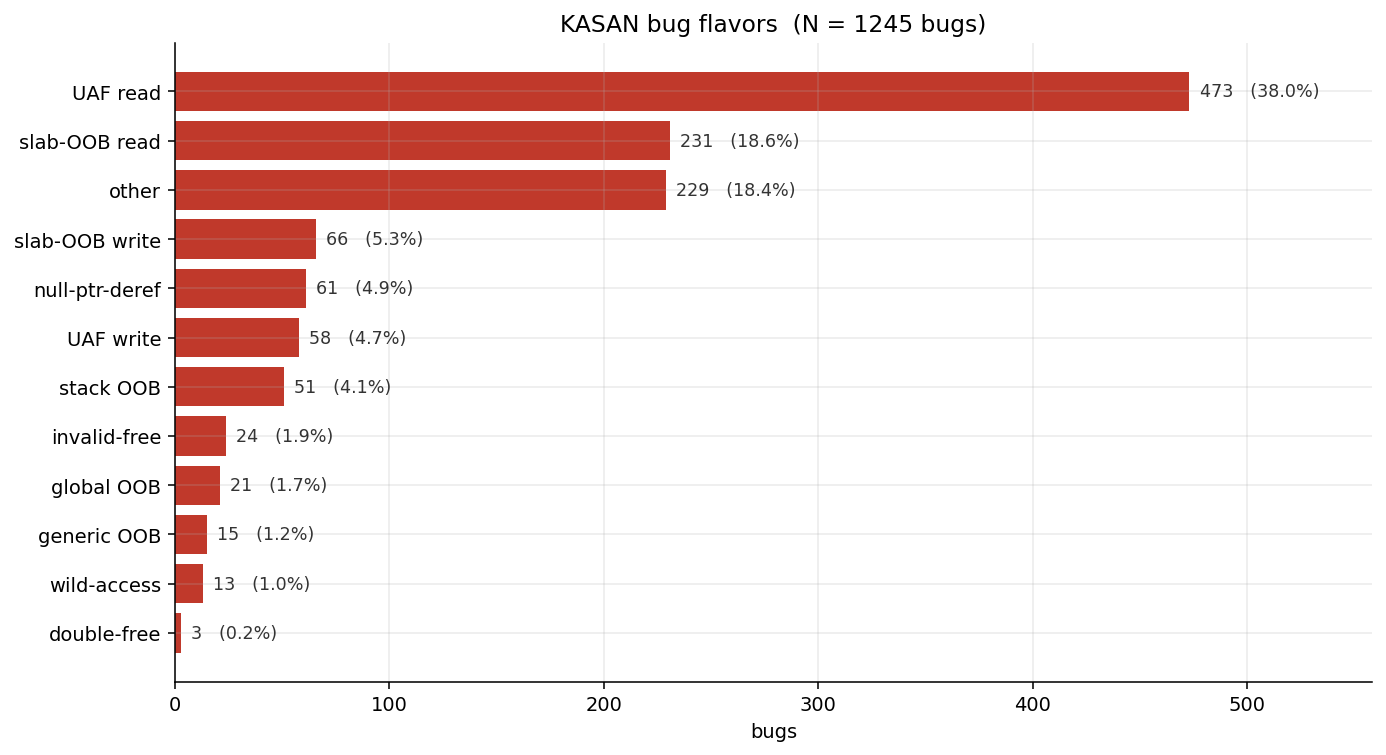

the texture of KASAN bugs

Within the 1,245 KASAN bugs, use-after-free reads are 38%. Slab-out-of-bounds reads are 19%. Between them that's more than half of all KASAN bugs in the corpus. UAF writes are only ~5%, a roughly 8-to-1 ratio of reads to writes, which matches the intuition I've had for years but had never actually counted: kernel UAF reads are common, UAF writes are rare, and the exploit-development gap between them is enormous.

The tiny wild-memory-access slice (13 bugs) is the scariest category. These are accesses to pointers that are so corrupted KASAN can't even tell you which allocation they came from. Those almost always involve a type confusion or an uninitialized struct embedded somewhere else.
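A breakdown like this can be derived from bug titles alone. The sketch below assumes titles of the form `KASAN: <kind> [Read|Write] in <function>`, and folds `slab-use-after-free` into `use-after-free`. That folding is my assumption, not necessarily how the numbers above were bucketed, and the function names in the sample titles are made up.

```python
import re
from collections import Counter

TITLE_RE = re.compile(
    r"^KASAN: (?P<kind>[\w-]+)(?: (?P<access>Read|Write))? in (?P<func>\S+)"
)

def classify(title):
    """Return (kind, access) for a KASAN title, or None for anything else."""
    m = TITLE_RE.match(title)
    if not m:
        return None
    kind = m.group("kind")
    if kind == "slab-use-after-free":
        kind = "use-after-free"  # assumption: treat both spellings as one bucket
    return (kind, m.group("access"))

# Hypothetical titles for illustration.
titles = [
    "KASAN: use-after-free Read in example_a",
    "KASAN: slab-use-after-free Read in example_b",
    "KASAN: use-after-free Write in example_c",
    "KASAN: slab-out-of-bounds Read in example_d",
    "KASAN: wild-memory-access Read in example_e",
    "WARNING in example_f",
]

breakdown = Counter(c for c in map(classify, titles) if c is not None)
```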

the shape of a bug

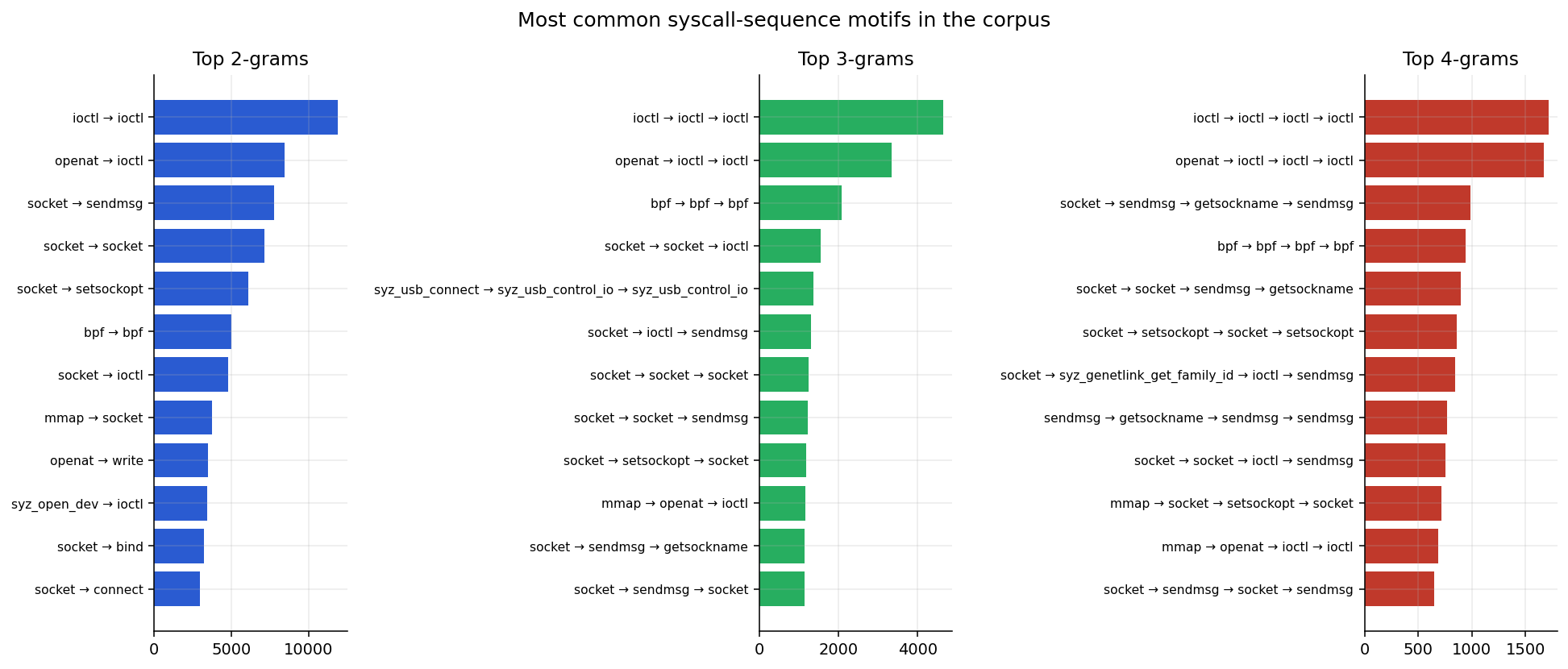

The part of this I find the most fun is the motif analysis (and I shared a preview on X). For every reproducer I extract the syscall-name sequence and count all n-grams across the corpus. Here are the top 2-, 3-, and 4-grams:

A few things:

The single most common 4-gram in the corpus is ioctl → ioctl → ioctl → ioctl, with 1,713 occurrences. The next most common is openat → ioctl → ioctl → ioctl. Kernel driver bugs live inside ioctl state machines. You open a device, you drive it through a series of ioctls, and somewhere around the third or fourth call a contract breaks. This information should radically help someone fuzzing their device driver :))

BPF has its own motif universe. bpf → bpf → bpf → bpf (940 occurrences), covering map creation, program loading, attachment, and map updates, is the second-most-common four-call shape after ioctls. BPF bugs live in the interactions between those steps.

Networking splits into two motifs. The setsockopt-heavy one (socket → setsockopt → socket → setsockopt) is mostly protocol configuration bugs. The sendmsg-heavy one (socket → sendmsg → getsockname → sendmsg) is mostly netlink and packet-socket parsing bugs. You can tell them apart visually in the 4-gram chart.

And then there's the USB stack, which shows up as syz_usb_connect → syz_usb_control_io → syz_usb_control_io at 1,363 occurrences. If you are fuzzing USB drivers and your fuzzer can't emit that exact prefix, it will find nothing.

This info should help researchers use the right grammar for the right results.
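The motif counting itself is a sliding window over each program's syscall-name sequence. A minimal sketch, where `ngrams` and `count_motifs` are illustrative names and every occurrence is counted (rather than once per program, which is a choice the charts may or may not share):

```python
from collections import Counter

def ngrams(seq, n):
    """All contiguous length-n windows of seq, as tuples."""
    return [tuple(seq[i:i + n]) for i in range(len(seq) - n + 1)]

def count_motifs(programs, n):
    """Count every n-gram occurrence across a list of syscall-name sequences."""
    counts = Counter()
    for names in programs:
        counts.update(ngrams(names, n))
    return counts

# Toy sequences for illustration.
programs = [
    ["openat", "ioctl", "ioctl", "ioctl", "ioctl"],
    ["socket", "setsockopt", "socket", "setsockopt"],
    ["syz_usb_connect", "syz_usb_control_io", "syz_usb_control_io"],
]

top_3grams = count_motifs(programs, 3).most_common(3)
```

Programs shorter than n contribute nothing, which matters when the median program is four calls long.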

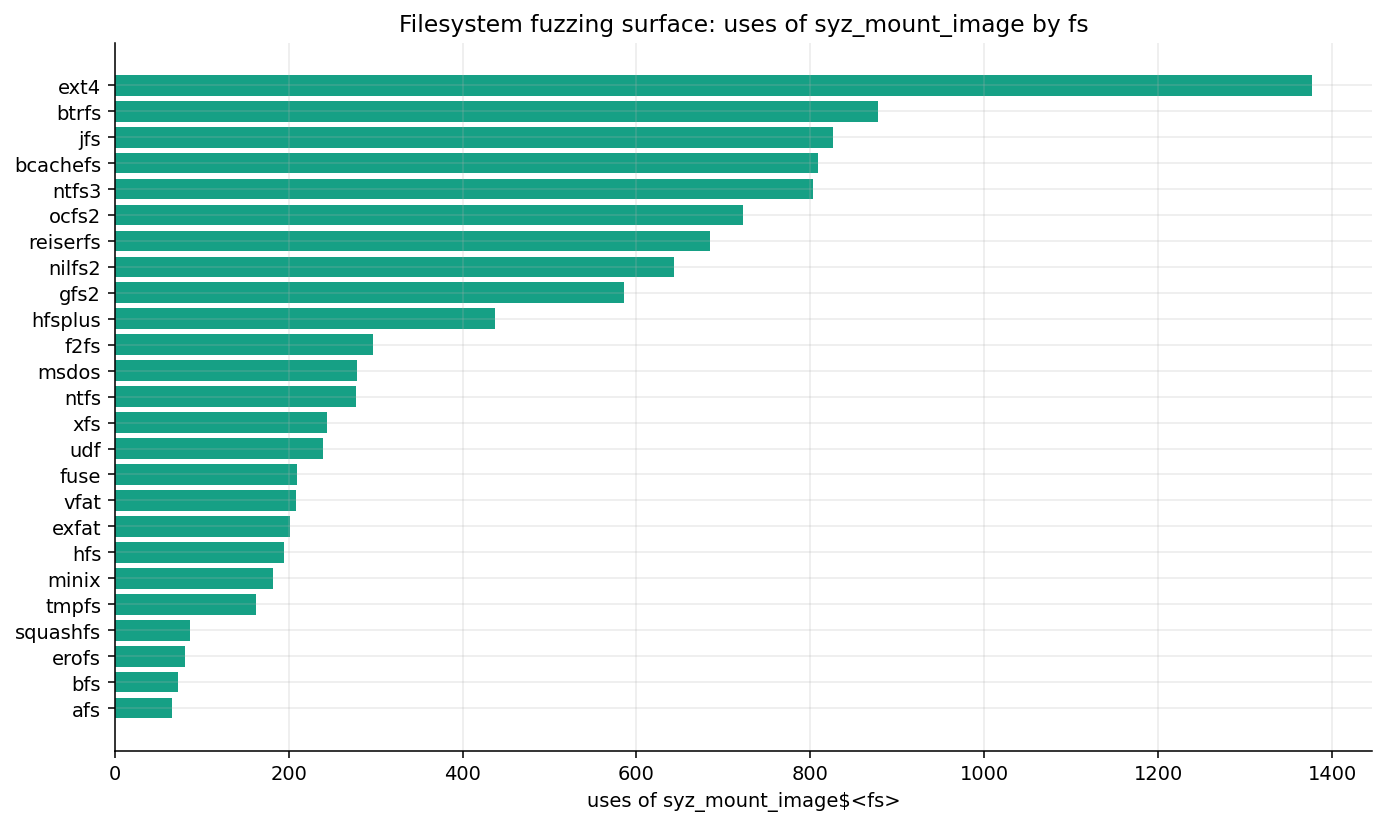

filesystems are a superset of syz_mount_image

If you're specifically interested in filesystem fuzzing, this is the chart:

Ext4 leads, predictably. But the second tier (btrfs, jfs, bcachefs, ntfs3, ocfs2, reiserfs, nilfs2, gfs2) is clustered between 600 and 900 uses. These are the maintained but under-audited filesystems. Each has a rich feature set and a lean history of defensive coding.

The long tail is where it gets interesting. hfsplus, f2fs, msdos, ntfs, xfs, udf sit in the 200-450 range. And then afs, sysv, iso9660, jffs2, hpfs, cramfs, v7, befs, pvfs2, qnx6, romfs each get under 100 uses. Many of these are legacy filesystems still in the kernel tree. Bugs in these legacy filesystems are disproportionately likely to be interesting because they sit in old parsing code with low audit pressure.

If you care about ROI on time invested, this should guide you to the right filesystem. I personally want to review bcachefs sometime.

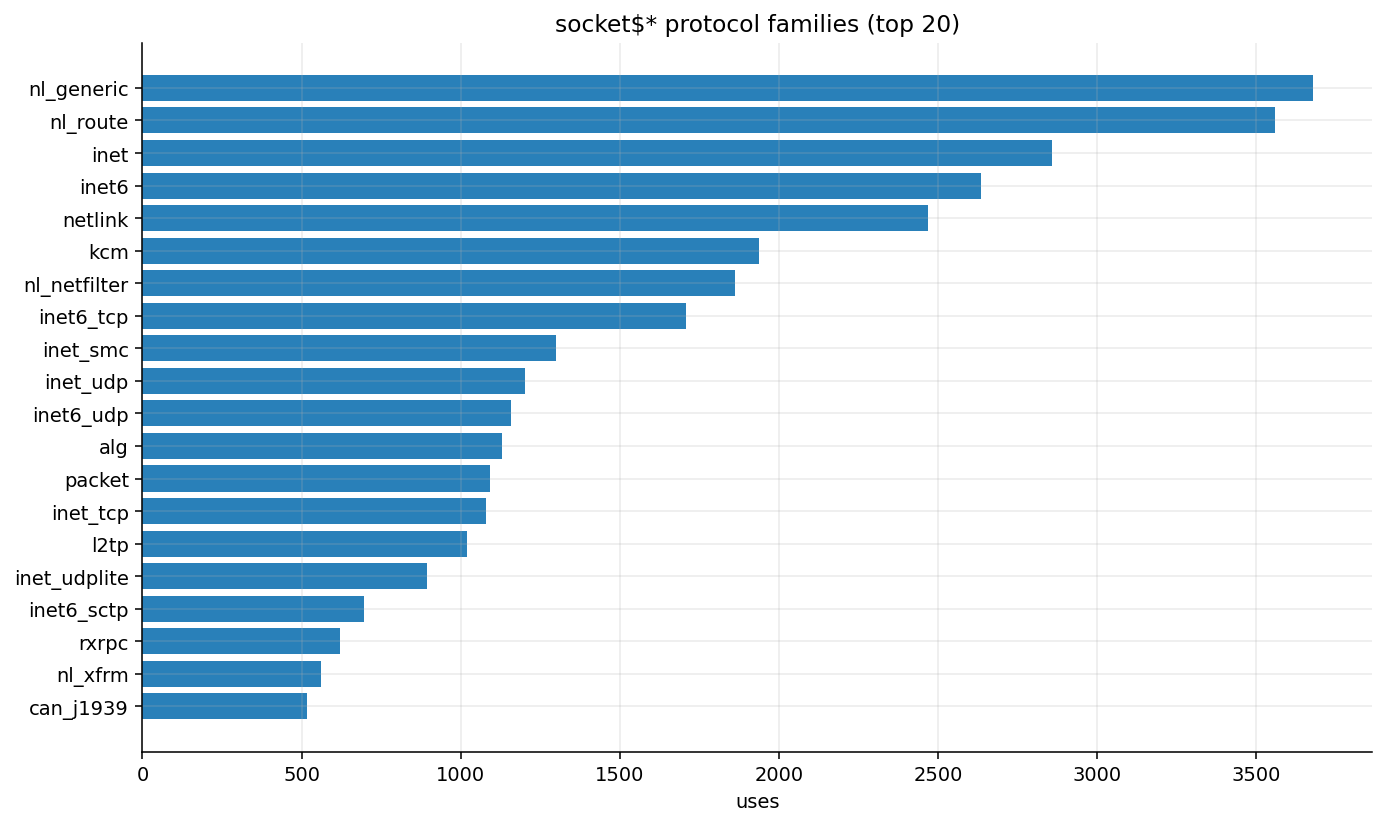

networking is netlink

The top seven socket$* variants (nl_generic, nl_route, inet, inet6, netlink, kcm, nl_netfilter) are all either direct netlink sockets or have netlink as their primary control plane. inet and inet6 aren't netlink sockets, but by the time a bug in an inet socket surfaces it's usually because something configured via rtnetlink created a weird state.

Netlink is the kernel's configuration bus. Its parsers are where the bugs live. This is the same observation as the KMSAN concentration in net.

the long tail matters

Here's something that doesn't get a chart but matters a lot: 972 of the 3,369 distinct syscall variants (28.9%) appear in three or fewer programs. Things like accept4$x25, arch_prctl$ARCH_SHSTK_LOCK, bpf$auto_BPF_MAP_FREEZE, execve$auto. The weirdos.

These are the programs you should be most careful with when you're building a minimized seed corpus. When I build a small seed set out of this corpus, I keep the singleton-variant programs even if the coverage math says I shouldn't.
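Picking those programs out is straightforward once each program's variant set is known. A sketch with a hypothetical `programs` mapping (program id to variants) and a tunable threshold, so it covers both the "three or fewer" and the singleton cut:

```python
from collections import Counter

def rare_variant_programs(programs, max_programs=3):
    """Return ids of programs that use any variant seen in <= max_programs programs."""
    seen_in = Counter()
    for variants in programs.values():
        seen_in.update(set(variants))  # count programs, not invocations
    rare = {v for v, n in seen_in.items() if n <= max_programs}
    return {pid for pid, variants in programs.items() if rare & set(variants)}

# Toy corpus for illustration; ids are made up.
programs = {
    "p1": ["socket", "sendmsg"],
    "p2": ["socket", "sendmsg", "accept4$x25"],
    "p3": ["socket", "bpf$auto_BPF_MAP_FREEZE"],
    "p4": ["socket", "sendmsg"],
}

# Programs to pin into the seed set regardless of coverage-based minimization.
keep = rare_variant_programs(programs, max_programs=1)
```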

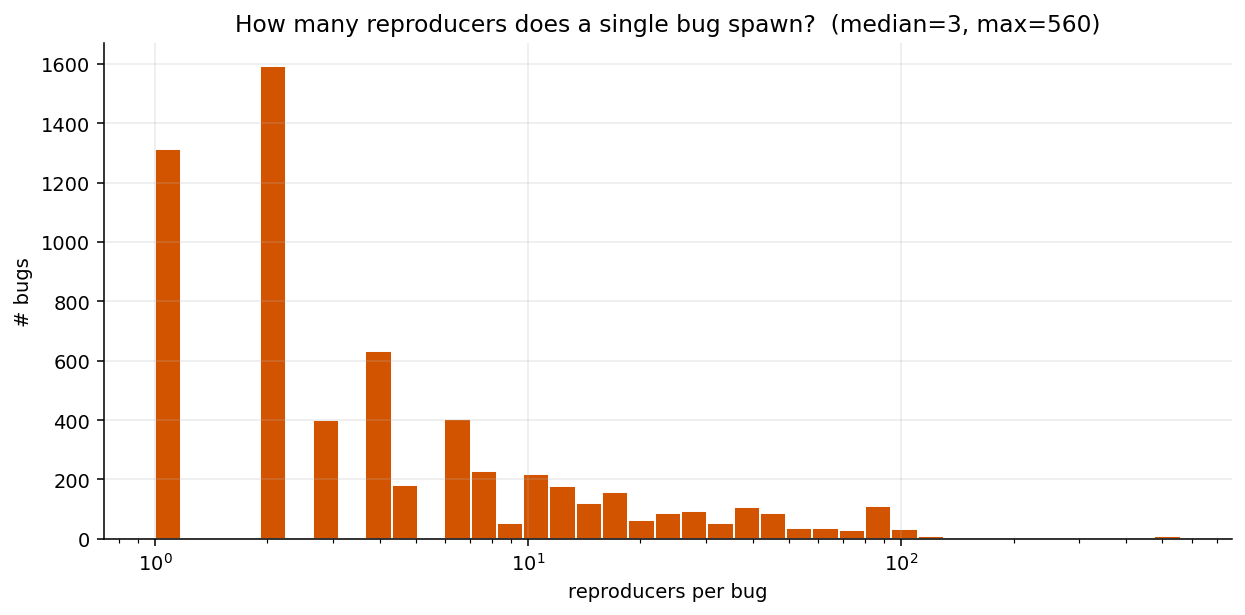

how many reproducers does a bug get?

Median is three. But 21% of bugs (1,313 of them) have exactly one reproducer. And at the other end, five different bugs are tied at the ceiling of 560 reproducers each: a KASAN UAF in __schedule, a GPF in pppol2tp_connect, a deadlock in rtnl_lock, one in kernel_sock_shutdown, and a WARNING in smc_unhash_sk.

Those 560-repro bugs are evidence of syzbot catching the same underlying defect hundreds of times from slightly different angles before the fix landed. They're also where the median hides the real shape of the distribution. The median is three, but the mean is something more like eleven because of those outliers.
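The mean follows directly from the totals at the top of the post, and a toy distribution shows how a single 560-repro outlier drags it away from the median. The `toy` list is made up for illustration:

```python
from statistics import mean, median

# Totals from the basics at the top of the post.
total_reproducers, total_bugs = 68_424, 6_251
corpus_mean = total_reproducers / total_bugs  # just under 11

# Made-up reproducer counts per bug, with one outlier at the 560 ceiling.
toy = [1, 1, 2, 3, 3, 4, 5, 560]
toy_median = median(toy)
toy_mean = mean(toy)

print(f"corpus mean: {corpus_mean:.1f}")
print(f"toy median: {toy_median}, toy mean: {toy_mean}")
```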

For fuzzing purposes, the single-reproducer bugs are the most valuable part of the corpus. Those are the 1,313 seeds we want in a minimum-viable seed set (ideally).

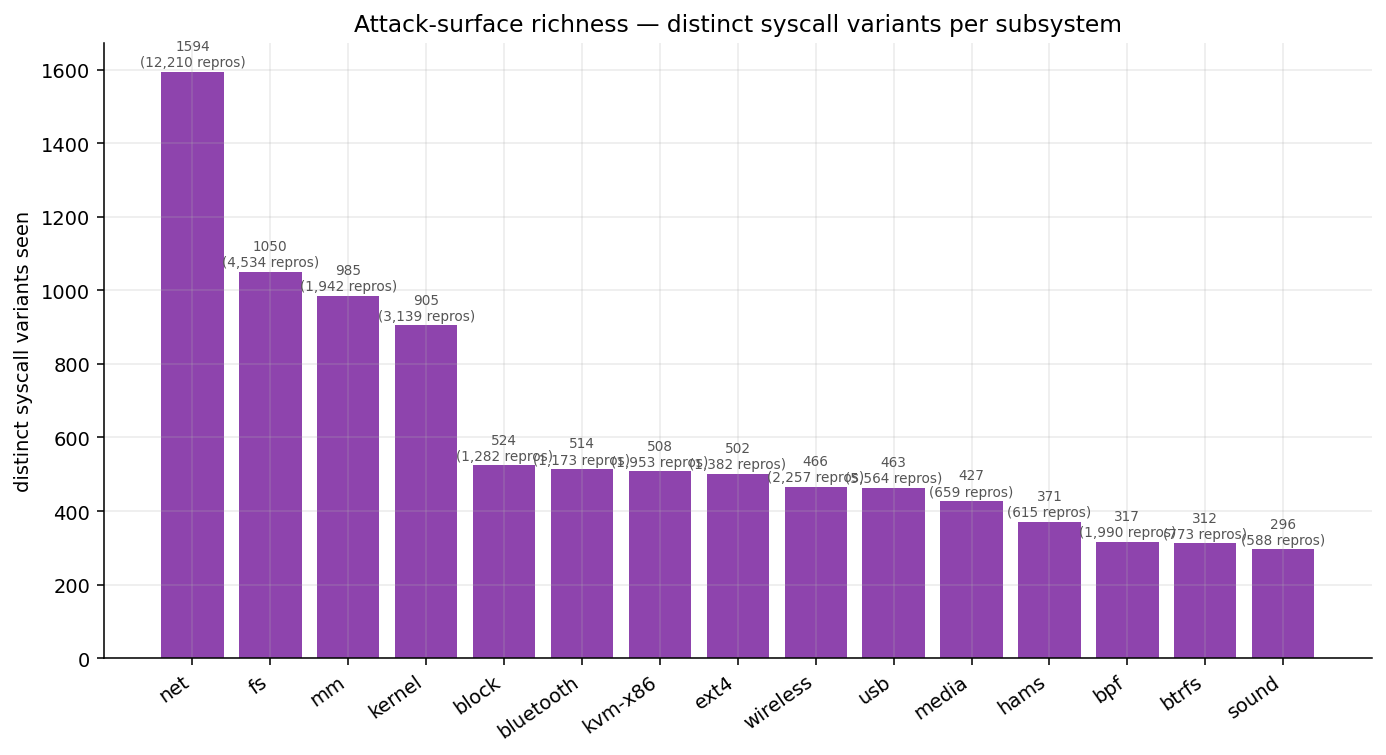

attack-surface richness

Last chart:

This is the number of distinct syscall variants that show up in each subsystem's reproducers. It's a rough proxy for "how wide is the API that touches this subsystem."

Net is the runaway leader at 1,594 distinct variants. This is both the protocol-family breadth (every new protocol multiplies variants by N, because setsockopt and sendmsg all get $<family> suffixes) and the general "net has a lot of syscalls" story. Fs, mm, and kernel form the next tier. Then a gap, and then block, bluetooth, kvm-x86, ext4, wireless, usb, media, hams all sit in the 370-520 range.

You can read this two ways. Either "net is the biggest attack surface by a wide margin," which is true, or "media and hams have 400+ variants on ~650 programs, so each program hits an unusually large number of unique syscalls and the calls-per-program density is high." Both are right, and they imply different bug hunting strategies. For net, breadth is the game. For media or hams, you need deep per-module setup.
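Both readings come out of the same two numbers per subsystem: distinct variants and program count. A sketch, assuming each program arrives as a `(subsystem, variants)` pair (a hypothetical shape, with toy data):

```python
from collections import defaultdict

def richness(labeled_programs):
    """Map subsystem -> (distinct variants, program count, variants per program)."""
    variants = defaultdict(set)
    programs = defaultdict(int)
    for subsystem, prog_variants in labeled_programs:
        variants[subsystem].update(prog_variants)
        programs[subsystem] += 1
    return {
        s: (len(variants[s]), programs[s], len(variants[s]) / programs[s])
        for s in variants
    }

# Toy data: "net" repeats the same calls, "media" uses new ones in every program.
labeled = [
    ("net", ["socket", "sendmsg"]),
    ("net", ["socket", "sendmsg"]),
    ("net", ["socket", "setsockopt"]),
    ("net", ["socket", "sendmsg"]),
    ("media", ["openat", "ioctl$A", "ioctl$B"]),
    ("media", ["openat", "ioctl$C", "ioctl$D"]),
]

stats = richness(labeled)
```

The first element of each tuple is the breadth reading, the last is the density reading.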

what I'd do differently if I were fuzzing today

A few things I'd take from this:

- Start with the 3-gram priors. ioctl → ioctl → ioctl is 4,642 historical bugs worth of prior knowledge. Leverage the high-impact grammar structures and define those as new templates.

- JFS, NTFS3, and bcachefs are overproducing bugs relative to how much attention they get.

- KMSAN + netlink is a gold mine and almost certainly not played out. 127 bugs in net alone, and the netlink parser surface keeps growing. Although, the codebase is insane, so some kind of LLM play is in order.

- Short programs outnumber long ones ~100:1. A bug-finding oracle does not need to generate 50-syscall sequences. The median useful program is four calls long. I should really look into the bonsai fuzzing stuff again with this motivation.

what this dataset doesn't tell you

Worth being honest:

The corpus is labels and programs. There's no coverage data in the repo, so my "attack surface" analysis is a proxy built on distinct syscall variants, not on actual basic-block coverage. If you want the real coverage picture you need to run the programs against an instrumented kernel, which is doable but out of scope for a weekend project. Also, I haven't looked at any exploits or bug repros from outside of syzbot.

There's no first-report date, only a fixed date. So I don't know "are ntfs3 bugs being found faster in 2026 than 2025?" from this data alone. Syzbot tracks that, but not in the bug-page metadata I scraped, and I'm lazy.

Counts are over syzbot-labeled bugs and reproducers, not unique root causes. Subsystem labels are inherited from syzbot and are noisy. Syscall-variant richness is a proxy for API breadth, not measured basic-block coverage.

the tool

All of the above came out of a tool I wrote called kmap. It's a small Flask app backed by SQLite. The code is in the kmap repo.

To run it:

pip install flask requests beautifulsoup4

python3 -m kmap.index # parse files/ into kmap.db, ~2 min for 68k programs

python3 -m kmap.scrape # fetch syzbot metadata, rate-limited, ~1.5 hrs full

python3 -m kmap.app # http://127.0.0.1:5057

I probably won't keep building on this. But if there's a question I didn't answer in this post that you think the data should be able to answer, I'd like to hear about it (@oswalpalash on X).